Disrupting Hell: Accountability for the Non-believer

by Professor Clayton M. Christensen, Craig Hatkoff and Rabbi Irwin Kula

For millennia religion has been one of civilization’s primary distribution channels for moral and ethical development: it helps us determine what is right and wrong, and it creates accountability for our actions. Yet for an increasing number of people, religion is no longer getting these two critical jobs done. We argue that without a strong moral and ethical foundation, peace and prosperity will remain elusive and civilization will at some point simply founder. Perhaps we need innovation in “moral and ethical” products, services and delivery systems for non-consumers of traditional religion.

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

The effective functioning of capitalism and democracy depends not only upon clear rules and mechanisms for accountability for obvious transgressions but also upon people voluntarily obeying “unenforceable” rules– that is, rules governing how we behave when no one is watching. America’s market economy has worked largely because its people have chosen to obey the rules even when they cannot be caught or are highly unlikely to be caught. Why is that? In the mid-nineteenth century de Tocqueville offered one explanation: an individual’s fear of being eternally relegated to the netherworld strongly discouraged bad behavior. He warned, “when men have once allowed themselves to think no more of what is to befall them after life, they readily lapse into that complete and brutal indifference to futurity.”

On the Great Seal of the United States, our Founders included the Divine Seeing Eye that determined whether we would be rewarded or punished in the next life. But what happens when more and more people stop believing in that Divine Seeing Eye? Albeit in various forms, a temporal Seeing Eye starts to emerge: Big Brother is watching. Almost all successful market economies have historically had strong religious underpinnings with the notable exceptions of Singapore and China. Those two particular regimes have relied upon command and control societies that function very effectively even without religion. Everyone is watched and everything is seen; justice for transgressions is meted out swiftly and severely. With limited due process, bad behavior carries serious consequences– not exactly the environment where most Americans would want to live. But what happens when people neither believe in the Divine Seeing Eye nor want to live under a temporal Seeing Eye? Who or what will hold us accountable? Perhaps we need another kind of Seeing Eye– the Seeing I.

Religion has wielded a powerful set of accountability technologies such as the promise of reward (Heaven) and the threat of punishment (Hell). Yet today, religion’s role in fostering personal accountability is no longer getting the job done for a rapidly increasing number of people; nearly one-third of the population under 35 sees no meaningful role for religion in their lives. So what set of moral and ethical principles, codes, rules and practices will hold behavior in check? And how will these be delivered if religion is no longer the primary distribution channel for moral and ethical conduct for a wide swath of civil society?

Disruptive innovation in religion can help find alternative products and delivery systems that cultivate moral and ethical behavior. These new systems will emerge via widely distributed bottom-up movements and processes starting with the intuitions of a new breed of early moral adopters. These early adopters will need to reach non-consumers of our traditional moral and ethical products and services in unconventional ways. Disrupting religion can help find alternative delivery systems for moral and ethical behavior.

Religions are facing serious product and distribution issues. Just as businesses are subject to the forces of disruptive innovation, religions’ “business models” are also subject to threats to their sustainability in addition to other challenges to their well-established business principles: percentage utilization versus total capacity (e.g. what to do about those empty pews), marginal costs exceeding marginal revenues (where profitable activities prop up the less profitable or money losers altogether). Like the cable companies who face cord cutting, religions are also facing pressure from product unbundling and disaggregation and an overall declining market share from changing demographics. Their expensive bricks and mortar distribution models create exorbitantly high fixed costs of operations.

Religions have become subject to the condition we call “creeping feature-itis” (too many features, too inaccessible, too much expertise required, too complicated, too expensive for many). Religions might be well advised to revisit the jobs that need to get done and to find new ways to reach the non-consumer. Disruptive Innovation Theory can help us understand the market dynamics and develop new strategies and approaches. The incumbents in religion need to disrupt themselves or, otherwise, they too will face the music and be disrupted by external forces. The onslaught will likely come from low-end, user-friendly products and more efficient delivery systems that are good enough to get the job done: to help us create a more ethical and moral society with real personal accountability.

But even if we do devise new modes of personal accountability, another perhaps more vexing challenge is the sheer complexity itself of today’s financial, political, and cultural systems that unintentionally undermine personal accountability. Conflicting laws and regulations, arcane accounting, and byzantine systems of taxation reward those with highly specialized expertise and the knowledge of how to circumvent clumsily cobbled together sets of best intentions of lawmakers and regulators. In a post-industrial society with hyper-connected, highly complex webs of interactions, the chain of personal responsibility gets hidden from view, absorbed into a larger networked set of activities and players. Bad behavior (both intentional and unintentional) is easy to commit and increasingly hard to identify. Responsibility becomes like peanut butter thinly spread across a piece of toast.

As the saying goes, one bad apple spoils the bunch. In like manner, it’s hard to identify which bad peanut caused the batch of peanut butter to spoil. If it’s difficult to even know when we are doing something wrong, and equally hard to find the transgressor, then we are on the cusp of an accountability deficit that limits the progress of civilization. We refer to this phenomenon as the Peanut Butter Paradox: all individuals are acting morally yet the collective behavior leads to utterly immoral results. The individual peanuts are all doing what they are supposed to do but the entirety of the effort produces rancid peanut butter. Which peanut should be held accountable?

If you want peanut butter desperately, running out to the local supermarket to buy a jar of your favorite brand is more cost-effective than buying raw peanuts and making peanut butter at home. It’s the classic “make versus buy” decision that we take for granted these days. Admittedly, in a future filled with 3D printers things might change. But for now, with peanut butter, as with most necessities and conveniences of modern life, the “buy” usually beats out the “make” decision handily. The obvious explanation is Adam Smith’s division of labor.

At first blush, Smith seemed to believe the division of labor was the greatest thing since sliced bread. Or at least it was the best way to make sliced bread. But if you have managed to get about halfway through the 800-odd pages of Wealth of Nations, you probably stumbled upon Smith’s harsh indictment of the societal consequences of the division of labor. Smith warns: “The man whose whole life is spent in performing a few simple operations… loses the habit of exertion [of thought] and generally becomes as stupid and ignorant as it is possible for a human creature to become.”

In a world of mass-market consumerism, the production of almost all goods and services entails more and more specialization. The agrarian peanut farmer has yielded to the meta-corporate-military-industrial-peanut-butter complex: growing, harvesting, processing, shmushing, packaging, marketing, and distributing this throat-clogging delicacy that touches all aspects of human existence.

Smith nailed down the division of labor to ten or so men with 18 distinct operations for making pins. Together in one factory, they could bang out 48,000 pins in a day versus one man trying to make the entire pin by his lonesome who could scarcely make one pin in a day. In today’s world, it is now almost unfathomable as to the number of tasks, sub-tasks, sub-sub-tasks along with the matrix of “specialists” needed to turn peanuts into peanut butter. So will Smith’s caveat come home to roost? Will the division of labor lead us down a parallel path where we become morally “stupid and ignorant” as well? Through no fault of our own, this might simply be due to the collective loss of thought.

Smith’s example of making pins just isn’t what it used to be. Human activity across the spectrum has become rather more complicated. It feels like everything spews forth from global networks and systems using advanced technology that involve many parties and many processes in far-flung places. There’s a department for everything. Our minds begin to adopt a job shop mentality departmentalizing all aspects of humanity as well– home, work, family, school, charity, taxes, religion, sports, politics etc. We move from one disconnected life department to the next rarely taking the time or the inclination for reflection. Without ongoing reflection, we begin to lose perspective of the greater whole. We deconstruct everything and tend to look at our actions in atomized pieces.

There is a real danger when behavior gets viewed as a deconstructed series of discrete actions. It’s as if we are only examining the actions and intentions of the individual peanut and missing the entire batch of rancid peanut butter. It allows the individual peanut to escape an overall societal accountability by reassuringly whispering to himself: “But I was only doing my job and I did nothing wrong!” For those with even a rudimentary understanding of quantum physics, the wave-particle duality might be helpful: the peanut is the particle and peanut butter is the wave. And yes, it’s both at the same time.

With an onslaught of globalization and modernity, we construct webs of interconnected nodes, spheres, and linkages comprised of fluid complexes of activity. These layered complexes stretch across endless processes and the entire continuum of principal-agent relationships, whether among individuals, institutions, companies, or countries, each with their own cultures, languages, contexts, and stakeholders. But as the world just keeps on making more and more peanut butter, no one seems to stop to see if the end product is edible. Everyone tends to keep their eyes only on their own silo of activity. But when things go wrong, due to bad behavior, it starts to get pretty sticky. Finding the culprit and bringing him to justice is no easy task. Usually the accusations of an early moral adopter– or whistleblower– against corporate wrongdoing result in the predictably canned response: “These fanciful charges are without merit and we intend to vigorously defend ourselves against them!”

Governments, businesses and civil society operate through vast webs of organizations and institutions, global delivery networks and value chains where it is increasingly difficult to pinpoint responsibility for good behavior or bad. Historically bad behavior has been held in check in part by traditional accountability technologies (the Seeing Eye, Heaven, Hell, Purgatory, etc.). While admittedly a bit quirky (hey, it’s an off-white paper), it can be helpful to think of these accountability technologies as “moral apps.” Until recently, moral and ethical behavior has been rather effectively delivered through religions and their respective portfolios of moral apps. While a bit of a stretch, a business analogy might be that the major console video game platforms (PlayStation, Xbox and the Wii) have been disrupted by casual games available for free or almost free from an endless selection on iTunes or Facebook. Remember Angry Birds and Farmville? There was even the Confession App cautiously embraced by the Vatican (albeit non-sacramentally) as a “useful tool.” But the numbers undeniably show that religion has seriously begun to lose its grip. Almost 60% of all Christians believe Hell is not a place but merely a symbol of evil. America’s millennials (40%) eschew religious accountability altogether. If accountability has broken down, what new set of moral products and services and delivery systems can help foster good behavior and/or keep people in line?

New accountability technologies will be necessary to fill the gap, particularly where religion has left off for non-believers, or “non-consumers” in disruptive innovation parlance. But first we must help people recognize when they are doing something morally wrong, and what that wrong is. This will not be easy. It is time to introduce disruption into the domains of religion and ethics to restore Adam Smith’s notion of “moral sentiments.” This requires a new toolkit of disruptive innovations initiated by fearless early moral adopters.

Could Pope Francis be an early moral adopter and be on to something really big? Recently in his catechesis on World Environment Day (and reinforced in his epic Evangelii Gaudium), Pope Francis offered an ethical innovation. Wasting food is stealing: “Remember, however, that the food that is thrown away is as if we had stolen it from the table of the poor, from those who are hungry!” (June 5, 2013) OMG! “Thou shalt not steal” suddenly has a new, expanded meaning, and one that surely not everyone will agree with. Is wasting food really theft? For most of human history, where subsistence was the norm, waste wasn’t even an issue. But in a world of abundance will “Thou shalt not waste” emerge, as Francis suggests, as a modification or a whole new commandment altogether for which we will be accountable? Wasting food as theft just makes the discussion about “what is moral behavior?” a hell of a lot more serious in a culture absorbed with consumerism and materialism. What the justifications are for this new definition of stealing, how we monitor such behavior, and what punishments will be imposed (in this world or the next) for wasting food we cannot say. But it will be damn interesting to find out. But do I really go to Hell for wasting food?

Taco Bell might just have an interesting take on this new definition of stealing. Recently one of their mischievous trainees was photographed licking a stack of taco shells. The photo was posted on the Internet and then went viral. This amused neither the management of Taco Bell nor its customers. Another version of the story is that the “playful” employee was simply licking leftover taco shells that had been used for training and were to be thrown out afterward. The Pope’s new missive raises the bar: Was Taco Bell really wasting real food for training that should otherwise be shared with the hungry? Whose moral behavior would Pope Francis be more exercised about– the employee having fun or Taco Bell using real food for training?

Once upon a time life was simple. De Tocqueville keenly observed that it was reward and punishment, augmented by a healthy fear of what would happen in the “afterlife,” that kept the moral compasses pointing north. The American entrepreneur would otherwise exhibit a “brutal indifference to futurity.” Being productive might help you get to heaven but you better be good or else you’ll go to Hell. The act of God-fearing was deeply infused into the American psyche. Fear of consequences and personal accountability was a gravitational force that complemented Adam Smith’s Invisible Hand. Smith knew well the danger of liberating greed, ambition, and materialism as the necessary, animating energy of capitalism. Smith’s Theory of Moral Sentiments was his more important book– and the one that most people haven’t read. They tend only to read the first few pages of Wealth of Nations and leave it at that. In TMS Smith proffered a moral philosophy to hold in check personal transgressions and egregious behavior inevitably unleashed by capitalism. In a nutshell, Smith’s moral philosophy of the “impartial spectator” is that empathy for others ensures both the production of wealth and a good society.

Over time people have become less God-fearing. The terrifying images of Hell painted by artists such as 16th-century Dutch painter Hieronymus Bosch once spoke to the imaginations, fearful souls and consciences of the masses; today those works of art seem almost quaint. Nearly 30% of Americans under 35 and 20% of all Americans do not believe in the traditional notion of any religious accountability– a God who sees all and rewards and punishes us accordingly. Even among Catholics in the U.S., 99% of whom believe in God, only two-thirds strongly believe in Hell (and it is more likely a symbol, not a place). Curiously, 85% believe there is a heaven (and it’s a place). Go figure. Apparently, moral hazard has evaporated not only for the U.S. banking system but also for a significant percentage of U.S. Catholics. Be it God or the Federal Reserve, bailouts are available.

This is more important than many of us imagine because a successful market-based economy requires a high degree of trust that is undermined when there is little or no accountability. Studies show that simply hanging a picture of a pair of eyes on a wall will actually change behavior in a room. It’s no accident that “In God We Trust” is emblazoned on our currency. Remarkably, though probably unnoticed by most, the Founders built this moral app right into the Great Seal of the United States– they plastered the Divine Seeing Eye right above an unfinished pyramid on the seal. In 1935 FDR embedded the Great Seal on the back of the one-dollar bill. One of those funny Latin phrases, Novus Ordo Seclorum (trans: New Order of the Ages), possibly helped him market the New Deal. But that other funny phrase, Annuit Coeptis (trans: He approves of our undertakings), might serve as a contemporary moral app. Next time you pull out a dollar bill, take a moment and look at the eye on top of the pyramid and ask yourself: “Am I spending this dollar wisely?” Let us know how that works out. This little ritual might help us move from the Divine Seeing Eye into the internalized Seeing I of self-regulation for those with little or no interest in the providential.

There are few models of successful market-based economies without strong religious underpinnings. There are the Singapore and China (still a work-in-progress) models, which mete out justice swiftly and severely. The Divine Seeing Eye is replaced by a Temporal Seeing Eye that sees everyone and everything, conjuring up a brutal Big Brother that most Americans would find unacceptable, the NSA notwithstanding. In Singapore, the apocryphal punishment for chewing gum is caning. In reality Singapore does permit chewing gum for medical purposes by prescription only. But caning is alive and well.

In 1994 an American student prankster Michael Fay was enjoying a semester in Singapore; he was convicted of vandalism for stealing road signs and spraying graffiti. The authorities sentenced him to 83 days in jail. More infamously he also received four strokes of a rattan cane on the buttocks. Caning, either for chewing gum or spray painting, would hardly fare well on the scales of political correctness in this country. Fay went on to lead a fairly troubled life but has most likely stopped chewing gum altogether. But in America, it seems neither God the Father nor Government the Big Brother is enough to keep us in line.

But there seems to be a more vexing problem that necessarily precedes the issue of accountability: do people clearly understand if or when they are doing something wrong? In simpler times a good place to start learning how to behave was the Ten Commandments. It seems we’ve gotten a little rusty on the content of those ten imperatives and prohibitions. While 62% of Americans know that pickles are one of the ingredients of a Big Mac, less than 60% know that “Thou shalt not kill” is one of the Ten Commandments. (Then again, ten or so percent of the adults surveyed also believe that Joan of Arc was Noah’s wife.) In fact, the majority of those surveyed could identify the seven individual ingredients in a Big Mac: two all-beef patties (80%), special sauce (66%), lettuce (76%), cheese (60%), pickles (62%), onions (54%) and sesame seed bun (75%). “Thou shalt not kill” only beats out onions. It’s enough to make you cry. “Two all-beef patties, special sauce, lettuce, cheese, pickles, and onions on a sesame seed bun.” In one Big Mac promotion, the company gave customers a free soda if they could repeat the jingle in less than 4 seconds.

A catchy jingle undoubtedly made the Big Mac part of pop culture. Who could forget the 1975 launch of McDonald’s Big Mac? That jingle-laden effort takes second place for “most clever marketing campaign” in advertising history. Yet it was the unsurpassed marketing genius of film producer extraordinaire Cecil B. De Mille that surely takes the cake. De Mille gave the Ten Commandments a special place in contemporary American culture decades before they became fodder for the “culture wars” regarding separation of church and state.

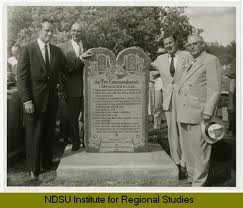

In perhaps the greatest marketing coup in American history, De Mille ingeniously tapped into the angst of Justice E. J. Ruegemer, a Minnesota juvenile court judge who was deeply concerned with the moral foundation of the younger generation. Starting in the 1940s, Ruegemer had, along with the Boy Scouts’ Fraternal Order of Eagles, initiated a program to post the Ten Commandments in courtrooms and classrooms across the country as a non-sectarian “code of conduct” for troubled youth. But De Mille took us to the next level. In 1956 De Mille seized the opportunity to plug his costly epic film, The Ten Commandments. He proposed to Ruegemer that they collectively erect granite monuments of the actual Ten Commandments in the public squares of 150 cities across the nation. The unveiling of each monument was a press event typically featuring one of the film’s stars. “OMG!!! Isn’t that Yul Brynner?” or “That’s Charlton Heston!” Absolutely brilliant! Things remained copacetic until the 1970s as this public expression of a code of conduct was apparently non-controversial. Then all hell broke loose, leading to a firestorm that still burns to this day on the issue of separation of church and state.

“Ten Commandments” as an expression didn’t even exist until the middle of the 16th century when it first appeared in the Geneva Bible, which preceded by half a century the King James Bible, first published in 1611. The Ten Commandments have the attributes of a disruptive innovation. They take the hundreds of laws in the Bible and boil them down to ten fundamental pearls that are more accessible and usable for the masses. Obviously not covering all the bases, they were clearly good enough to get the job done: to create a simple, accessible bedrock for moral and ethical behavior. Serious biblical scholars of the Ten Commandments view the vastly oversimplified code as a synecdoche, a part standing in for the whole of the much more complex set of laws and regulations.

With or without the Ten Commandments it seems that most people have internalized “Thou shalt not kill.” Cognitive scientist Steven Pinker points out that, as recently as 10,000 years ago, a hunter-gatherer had a 60% probability of being killed by another hunter-gatherer. At some point in history, the prohibition against murder became a conscious ethical innovation. By the eighteenth century BCE the code of Hammurabi, our earliest surviving code of law, prohibited murder, yet the prescribed punishment was based on the social status of the victim. Over a period of several centuries, our moral horizon expanded once again. The biblical commandment “Thou shalt not kill” evolved to eliminate any class distinction– clearly an upgrade.

Ethical innovations come in fits and starts. Yet over the long run ethical and moral horizons of civilization widen. Today, according to Pinker, murder rates are at the lowest in history. In the U.S. there were 13,000 murders in 2010. If our math is correct, that represents less than ½ of one-hundredth of one percent of the population, assuming no multiple murders and no suicides are included; about one person in 25,000 actually kills someone. While the numbers are less clear on adultery due to methodological challenges and interpretations (single incident versus multiple incidents, it takes two to tango, etc., etc., etc.), lowball estimates are that 20-25% of adults are guilty. Assuming 150 million adults, some tens of millions of Americans have committed adultery, give or take a few. It would seem not all commandments are created equal– or at least obeyed equally.

Morality has become more complicated. In simple situations with a “one-to-one” correlation (one person murders another, or someone steals a neighbor’s horse, or someone lies in court) we know what they have done is wrong and we hold them accountable. But we live in a society comprised of perpetual power imbalances, multiple stakeholders, ambiguous situations, and complex interdependent systems. Is releasing classified documents as a response to questionable or inappropriate government surveillance heroic or traitorous? In the July 10, 2013, Quinnipiac poll, 55% of Americans considered Edward Snowden a whistleblower/hero while 34% believed he is a traitor– a 20% shift in favor of Snowden since the earliest polls. Interestingly, normally polarized Americans are uniting against the unified view of the nation’s political establishment. The situation has become even more murky now that Snowden’s activities have been indirectly validated through the Pulitzer Prize. Determining what is right and wrong gets pretty dicey, especially when we don’t know what we don’t know. What we do know is: a hell of a lot of Americans really don’t want Big Brother reading their emails. But what are they afraid of?

Does a banker taking advantage of asymmetric information or the sheer complexity of regulations, accounting and tax codes, let alone multi-billion dollar abstruse securities, constitute unconscionable behavior that deserves punishment? Who is responsible for lung cancer deaths– the smoker, the tobacco company, the tobacco worker or the FDA? Who is accountable for obesity-related deaths– McDonald’s, corn subsidies, the overweight consumer or all of the above? Who is accountable for unsafe working conditions in the developing world– Apple, the Gap, Nike, Walmart, the local politicians, the builder– or the consumer who benefits from cheaper prices? Or are these just acceptable “costs of doing business?”

Perhaps we are undergoing the atomization of morality. Has morality become subject to the “distributed network effect” such that every action can find a morally acceptable pathway of justification? When systems, stakeholders, and situations become complexes replete with interdependencies, conditionality and incalculable unintended consequences, moral responsibility gets spread like peanut butter across a chain of activities, persons, corporations, partnerships, not-for-profit organizations, and government agencies. Complicating things further is that every agent has an obligation of loyalty to their principal. Peanut butter consists of a whole lot of peanuts, so which peanut or employee do we hold accountable for screwing up a batch of peanut butter? In like manner, one bad apple spoils the bunch, but it is hard to know which one once it becomes applesauce. How should we parse accountability when there is a complex chain of activities where this atomization of morality allows every individual to avoid taking responsibility for the whole? Oftentimes we have only an intuition that something is amiss or doesn’t feel right even if we can’t exactly put our finger on it. Executive compensation feels like stealing; the selling of tobacco products feels like killing, as does serving unhealthy food to the masses. Are these moral intuitions, like Pope Francis’s about wasting food, actually expansions of our moral horizons or just plain silly?

A new awareness of what constitutes moral transgressions must come before accountability can be effectively scaled to help ensure enduring peace and prosperity. This new moral awareness cannot arise without a great deal of civil discourse and yes, even uncivil discourse. Slavery was okay until it wasn’t. Gay marriage was not okay until it was. Smoking in public places was permitted until it wasn’t. Corporate polluters were acceptable until they weren’t. Changing the moral baseline is always painful. Issues of accountability at scale are somewhat premature until we can collectively agree what constitutes right and wrong in an extended or networked chain of “values.” It’s hard to build consensus when everything is so damn complicated with multiple stakeholders, complex systems, and ambiguous situations. Until there is an inflection point– that quantum moment– where enough people can be energized by the intuitions of early moral adopters to see something is terribly wrong and to take action, there can be no widespread accountability.

All this calls for a new breed of moral innovators. It will be these early adopters– gifted with unusual charisma, empathy, and humility– who expand our moral horizon. They might even have to resort to civil disobedience. They will invariably face criticism and grave risks– financial, reputational, and even bodily harm– as they excite a new moral majority, many of whom will not believe in Hell but will embrace the possibility of a better “Heaven” on earth.